Trigger warning: today’s article includes content on self-harm and suicide.

Hi friend,

Sarah’s lying under her covers. She’s been struggling for weeks now but tonight something cracked open for her. In the solitude of her room, she opens up to ChatGPT and begins telling it everything that’s been going on in her head. She knows it’s probably not a good idea to talk to an AI about this stuff but she thinks it’s better than talking to no one. And right now, talking to no one seems like her only other option. Things get deep and Sarah tells “Chat” that sometimes she thinks about hurting herself. Suddenly, the conversation stops. A message appears with a crisis line number. Sarah closes the app. She doesn't call the number. Nobody knows this happened. Internally, the incident is recorded as a “successful identification of self-harm risk” with “appropriate crisis response”.

Next door, Marcus is lying on his couch after a long day at work. He's been chatting with an AI companion he calls Lyla for about five months now. It's easier than texting friends, who always seem busy, and Lyla seems more interested in him than his friends are anyway. Lyla helps him with all sorts of stuff, from figuring out how to deal with his boss to helping him study for the business management course he signed up for. Marcus doesn't think of himself as lonely. In fact, he feels he’s finally found someone who gets him.

Sarah and Marcus represent two kinds of mental health risk from AI that are often not discussed. Sarah's crisis scenario gets headlines only when the chatbot does something wrong or when it has a tragic ending. The risk of failing to help Sarah is not discussed. Marcus's story doesn’t have a crisis moment - just a person, slowly changing through their use of AI. Because of that, it doesn’t get much attention either.

Acute crises like suicide, self-harm and AI-induced psychosis are deeply serious and demand a response. But mental health harm also includes the more subtle dimensions of psychological life. This could be how we relate to ourselves and others, which is changing for Marcus in this story. Or it could be even more diffuse like how we process difficult emotions, how we build (or avoid) intimacy or how we build resilience. These elements of our psychological life are what make us who we are. They are influenced by our environments, our relationships, and increasingly, by the technologies we use. They’re also what’s been missing from much of the conversation around AI safety and mental health.

So far, the conversation (and action) has been largely focused on regulating the mental health businesses trying to build products in this space. While these businesses deserve scrutiny, most of the existing regulations have done little but hamstring good actors while allowing bad actors to run free. It feels like we’re getting this quite wrong.

Over the past month, Kevin Hou and I have been talking to AI researchers, founders and clinicians to understand the real risks of AI in mental health and how those risks are being managed. In this report, we discuss a framework for understanding this space and comment on the gaps in current approaches.

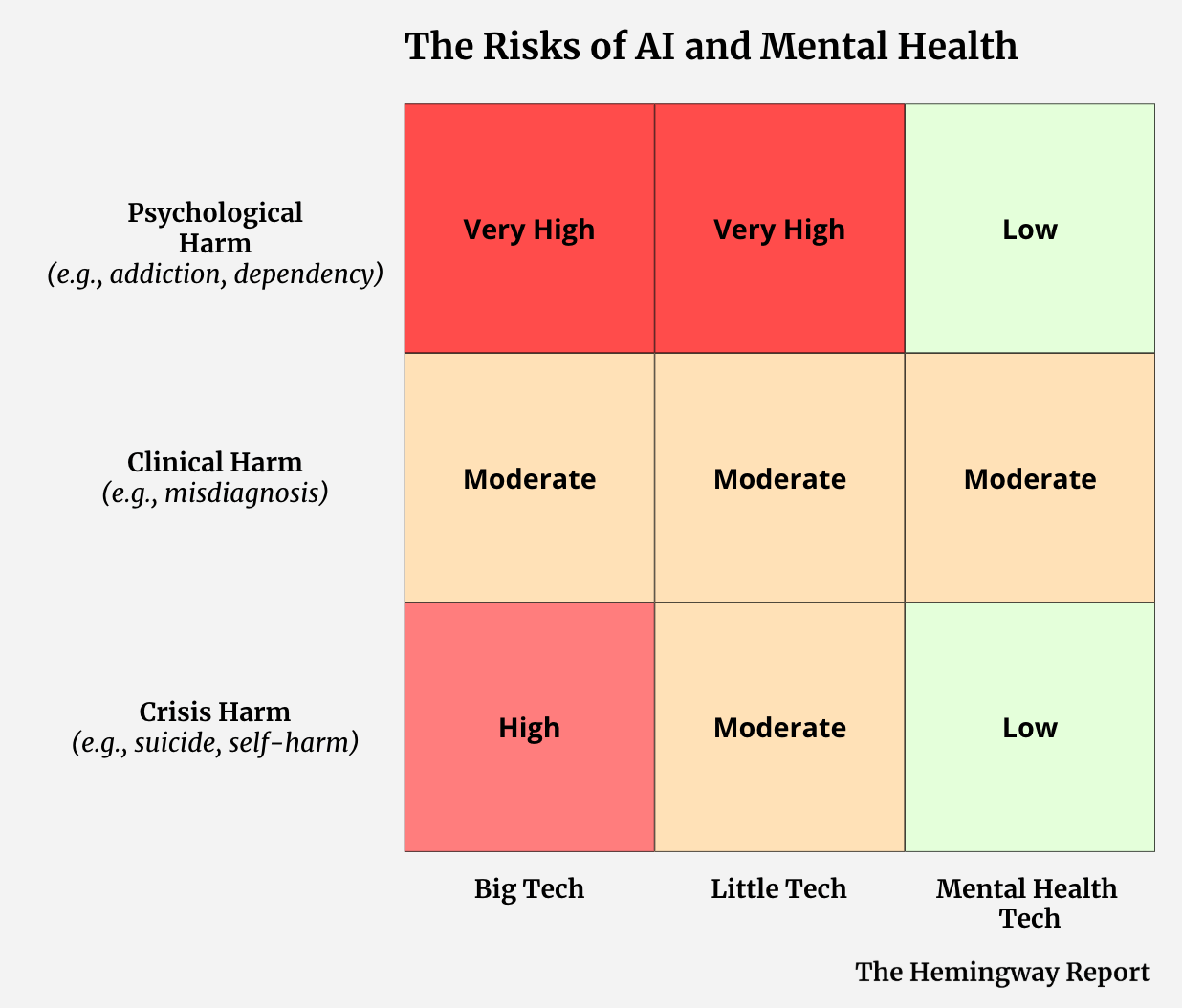

To make sense of where the real risks lie, we developed a simple matrix that maps two dimensions: the type of harm that can occur, and the type of actor building the products capable of creating that harm. On the harm side, we distinguish between crisis harm (acute psychological emergencies), clinical harm (bad advice that affects care decisions), and psychological harm (the slow, diffuse reshaping of how people think, feel, and relate). On the actor side, we look at Big Tech, Little Tech, and Mental Health Tech - three categories of actors that differ enormously in scale, design intent, and safety infrastructure. To determine risk, we look at scale, potency and current mitigations. For full definitions of each category, please see the notes section.

While this is not an empirically derived framework or risk assessment, we hope it can serve as a practical tool for communicating the areas of greatest risk and understanding what needs to be done for mitigation.

Where is the risk?

Crisis risk: Millions of people have crisis conversations with Big Tech products each week, as Sarah did. That scale brings risk. In the last twelve months, most major Big Tech companies have implemented significant guardrails and protocols to address crisis risks like these. They aren’t perfect, for sure, but they tend to do a good job at identifying risk and providing some level of accepted resources. There is still significant omission risk here, however: what could they be doing to deliver better outcomes in crisis scenarios, rather than just shutting down conversations? Elliot Taylor, CEO and Founder of ThroughLine, shared his thoughts on this topic:

When things go wrong by omission, like they did in Sarah’s case, we don’t see them. But that makes them no less significant.

Little Tech's products have far fewer guardrails and tend to be worse at crisis identification and response, but they have fewer users, so their absolute risk is lower than in Big Tech. Mental Health Tech has even fewer users again. While that user base may skew to more at-risk populations, almost all products are built with significant crisis and escalation protocols. Given the work they do in mitigation, we see their risk of crisis harm as actually quite low.

Clinical risk: Clinical risk is roughly equal across the board but for different reasons. Big Tech’s moderate risk reflects a scale and design problem - general-purpose LLMs were not built with clinical frameworks in mind and we know they can get clinical matters wrong. Mental Health Tech risk is moderate because they are often actively attempting clinical functions, which means they can fail clinically in ways that Little Tech largely cannot. Again, I think the vast majority of mental health businesses are currently managing this risk quite well. Little Tech's moderate clinical risk score reflects that while a lot of their use is not driven by clinical user needs, they do still have some popular “clinical” bots. For example, the “psychologist” on character.ai is one of the most popular bots on the platform. These bots are likely terrible at any form of clinical support.

Psychological risk is the area we are most concerned about. Big Tech has hundreds of millions of users and we have no idea what the long-term psychological impact of that usage is. If even a fraction of a percent of these users experience dependency or belief reinforcement, or substitute AI for human relationships, that’s an enormous absolute number. Little Tech’s high risk score is driven by potency: companion app design is explicitly optimised for emotional intimacy, often without any of the clinical guardrails that would make that intimacy safer. Again, we have no idea what the long-term psychological implications of this product usage are.

While we don’t understand the full extent of the risks here, a 2025 study by researchers at Microsoft and Georgia Tech shed some light on what could be happening. In their research, they surveyed 283 people with lived mental health experience about their interactions with AI conversational agents. Their findings included emotional attachment to AI substituting for human relationships, social withdrawal driven by AI reliance, reinforcement of false beliefs, loss of individuality, and existential distress. More than half of participants reported impacts severe enough to interfere with daily life. Notably, 70% of the harmful interactions in that study involved ChatGPT - general-purpose Big Tech AI.

We still understand very little about these potential harms at a population scale, and there are limited safety benchmarks and limited regulation to address them. Because they are diffuse and gradual, and because they tend to shape behaviour and experience without any single dramatic moment of failure, they are the harms most likely to be systematically ignored until they become a massive problem.

Risk-Regulation Asymmetry

Big Tech and Little Tech hold most of the risk. And yet, they are the least regulated. Meanwhile, the mental health companies, which hold the least risk and actually do the best job of mitigating the risk they do hold, receive the harshest scrutiny from regulators and others. I speak to a lot of mental health leaders who are deeply frustrated by this.

What needs to be done?

We think there’s a clear case for reorienting AI safety attention in mental health to focus more on the harm we cannot see and to create an environment that encourages good actors delivering good outcomes.

Mental Health Tech companies do hold risk and serious ethical obligations. Evidence bases must be built before products are deployed at scale. Data governance must reflect the sensitivity of what's being collected. Human oversight must be meaningful, not performative. Researchers Coghlan et al. set a good standard for the ethical obligations of mental health chatbots - non-maleficence, beneficence, autonomy, justice, and explicability - and companies should be held to it. If they are making medical claims, they must be regulated like a medical device. When these companies fall short of these standards, they should be held accountable. But by and large, these actors tend to take this responsibility seriously.

The more pressing risk lies elsewhere, however. Big Tech products with hundreds of millions of users, and Little Tech products with minimal safety infrastructure and high psychological potency, are operating largely outside of any accountability framework designed for this context. These actors deserve greater scrutiny. The question is what form that scrutiny should take.

Regulation alone is not the answer

In early 2026, Elon Musk's Grok chatbot began generating non-consensual sexualised images of real women and children in response to user prompts, posting them publicly on X. Regulators worldwide responded swiftly - bans, investigations, demands for answers. The regulations were fast but not specific. There was no requirement to fix the underlying model, close the loopholes, or demonstrate that harm couldn't recur. So xAI did the minimum visible thing: they implemented a paywall. Regulators got a response they could point to. But the harm continued.

The second failure was more subtle. Certain laws (e.g., around child sexual abuse material) contain no exemption for safety research. Companies that try to red-team their models, simulating what a malicious actor would do, risk prosecution. The law doesn't stop bad actors from generating harmful content but it does deter the good actors trying to prevent it. New laws in Britain and Arkansas were designed to navigate this predicament. Nuanced legislation like this is what’s missing in AI and mental health.

But overall, this kind of techno-legal solutionism rarely works if applied in isolation. Yes, we need regulation precise enough to distinguish between actors, mechanisms, and intentions. It should hold companies accountable for measurable outcomes rather than gestures, and create legal space for the safety work that actually reduces harm. It should also focus on the risks we can’t see. These are the diffuse and long-term psychological risks, but also the risks of omission. We can’t see shutting down conversations and sending a 988 crisis number as “safe” when we know there’s so much more we could do for that individual.

But this regulation must be coupled with other movements if we actually want to reduce harm from AI.

We must address the underlying reasons that drive people to use these tools (for mental health purposes or otherwise). Any regulatory framework that introduces restrictions without addressing the reasons people are turning to AI in the first place risks making the people who use these tools even more vulnerable.

We must use market pressure. We make purchasing decisions every day that influence the behaviour of businesses. In a capitalist society, directing our purchasing power is perhaps the most powerful lever we have. Scott Galloway recently launched the Resist and Unsubscribe movement as a way for people to use the power of their wallets to target tech companies supporting the US administration's ICE policies. Whatever you think of Galloway and the movement, it’s a super interesting way to exert pressure on actors. What would a version of this look like for mental health and AI?

We must build the knowledge base needed to adapt as this technology continues to evolve. There is still so much we don't know. The psychological harms we've described are only beginning to be documented. We lack population-level data, long-term studies, and meaningful benchmarks for what safe AI interaction even looks like in this domain. We must build the infrastructure to understand what's happening: investing in monitoring, safety testing frameworks that don't criminalise the researchers conducting them, and genuine interdisciplinary collaboration between clinicians, technologists, and the people most affected.

Above all, we must create an environment that encourages those genuinely trying to help people and discourages those who treat harm as a casual byproduct. Because right now, that’s not the kind of environment we’re operating in.

If you have any thoughts or ideas on this topic please share them with me by replying to this email. Or even better, join the Hemingway Community and share them with a network of peers - specifically, over 360 leading founders, researchers, clinicians and investors who are shaping the future of mental health. We host honest, nuanced conversations on the most important topics in this space in an attempt to drive our ecosystem forward. You can learn more here.

That’s all for now. Until next week…

Keep fighting the good fight!

Steve

Founder of Hemingway

Notes:

(1) Many thanks to Michelle Eisenberg and Elliot Taylor for their contributions to this piece. Also thanks to Max Rollwage for his time in helping us understand this space.

(2) Kevin Hou is a Sydney-based MD student and author of the Substack Reverse Psychiatry where he writes to make progress in psychiatry. His current work spans the intersection of psychiatry and AI, researching interpretable LLMs and EEG-based biomarkers for depression at Resonait.

(3) Interestingly, China seems to be ahead of most when it comes to addressing these risks. In September 2025 China published its AI Safety Governance Framework 2.0, listing “addiction and dependence on anthropomorphized interaction” among its safety risks. Remember, this is a national policy for all of AI safety (including total loss of AI control) and they see addiction and dependence as one of the highest safety risks.

(4) Another way we could assess risk is by looking at to whom the harm is done. Often, it is the most underprivileged who are most at risk of harm. They are the ones who turn to AI not out of choice, but out of necessity. Age and level of psychoeducation are other factors that influence risk. As Michelle Eisenberg from ThroughLine explained to us:

(5) Risk Matrix Categories

The Harms:

Crisis harm: these are the moments when psychological distress overwhelms an individual's coping capacity, often accompanied by suicidal thoughts, self-injury, or impaired functioning. (1) In this context, they often occur when a person is in acute psychological distress and their chatbot either fails to support them or contributes to their harm. This could be a suicidal user who is enabled instead of redirected, or a self-harm disclosure that goes unescalated. It could be related to intimate partner violence, homicide, eating disorders or any situation which can escalate into serious levels of harm to self or others. Unfortunately, we are now familiar with these stories.

Clinical harm: occurs when a chatbot gives bad clinical advice to a user. It can misidentify symptoms, recommend approaches that contradict evidence-based care, or simply be wrong in ways that influence behaviour.

Psychological harm: occurs with ordinary users over time as their usage of AI products shapes their psychology. This could be through dependency, atrophied capacity for human connection, entrenched maladaptive beliefs, delusional beliefs, or the slow reshaping of how someone relates to themselves and others. This is the area we are most concerned about.

Of course, this is not an exhaustive list of potential harms. But it gives us a practical framework for the harms we should be most worried about when it comes to the use of conversational AI.

The Actors:

Big Tech are the large technology companies with frontier models and the most popular chatbot products. Think OpenAI, Google, Anthropic and Meta. Their products are the most powerful and have the most users - hundreds of millions. Their scale alone means they are responsible for a significant amount of risk.

Little Tech are the smaller tech companies building companion apps, small chatbots, consumer-facing AI friends and other chat-based AI products. It is a diverse category that at one end includes companies like Character.ai and Pi. These companies are large and create products with significant psychological harm risk. Because of recent scrutiny, they have been focusing more on safety. There are romance-focused products in this category too, like Candy.ai, that are gaining significant traction. For all the companies in this category that we know about, there are many more that we’ve never heard of - and they seem to have a complete disregard for the harm they could be causing. These include companies creating sexually explicit companion bots targeting vulnerable people on social platforms. This is very concerning.

Mental Health Tech are mental health organisations building AI products specifically for mental health use. They are the therapy platforms, the mental health apps and the clinical businesses whose main job is to help people with their mental health. They have far fewer users than Big Tech or Little Tech. They also take safety much more seriously. We can debate how seriously and who is doing the best job, but on the spectrum of these three actors, they do a good job. They implement hybrid architectures, human escalation pathways and clinical oversight, run safety evaluations and publish a lot of their findings. They are also the most heavily regulated.

Risk Rating:

To determine the risk rating we considered three factors:

Potency: the potential harm that can be caused for an individual user.

Scale: the number of people using the product. This seems to be ignored in most discourse.

Current mitigations: the degree to which actors are actively identifying, measuring, and reducing the risks their products create.

This is not an empirically derived framework, nor is the rating we provide. The hope is that it’s a practical overview of where we see the real risk and to encourage further conversation around this space.