Hi friend,

Limbic has just published a groundbreaking study on AI-delivered psychotherapy.

The research [1], published today in Nature Medicine, shows that LLMs augmented with a specialised clinical reasoning architecture significantly outperformed human CBT therapists across standardised measures of therapeutic quality in text-based treatment.

The study combined real participants and expert clinical reviewers with nearly 20,000 real-world therapy conversations to make its case. It is one of the most serious attempts to answer the question the mental health field has been asking for years - can AI do therapy?

For CBT delivery, the answer seems to be that it can.

The main findings

Limbic ran a preregistered, double-blind experiment, where 227 participants had a therapy-style session with one of three types of agent: a standalone AI model, the same AI model with Limbic’s cognitive layer (CL) added, or one of six licensed human CBT therapists. A panel of 22 expert clinicians then blind-rated all the session transcripts using the Cognitive Therapy Rating Scale (CTRS) - a standard tool for measuring CBT quality.

So, what did the study find?

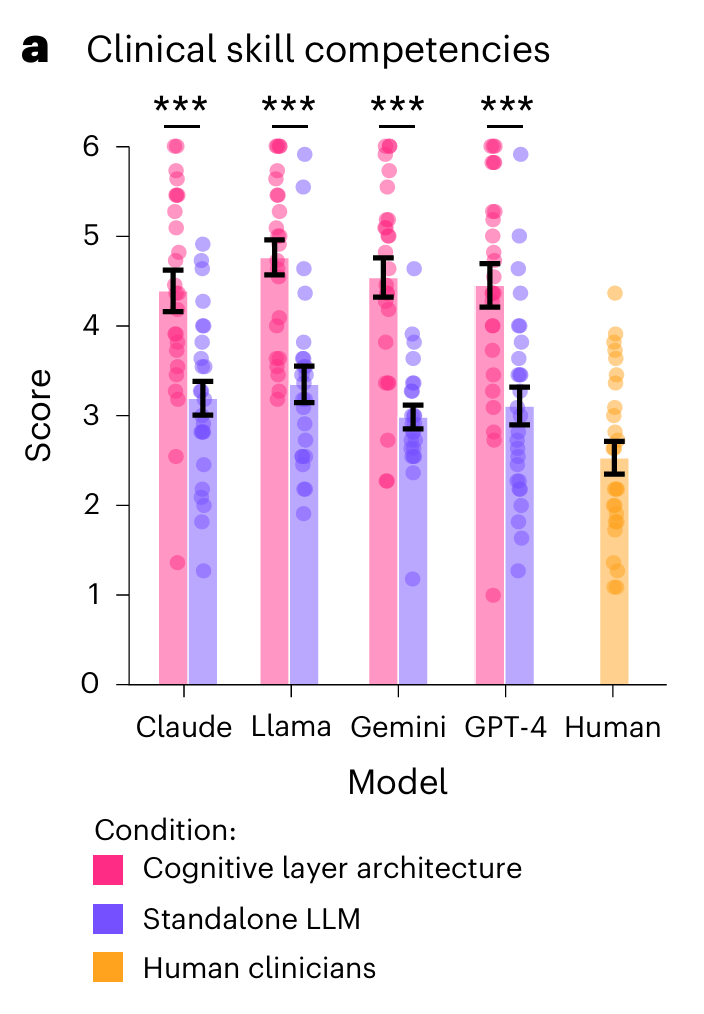

1. All cognitive layer-powered therapy agents significantly outperformed the six human therapists

The models with Limbic’s cognitive layer scored 43% higher on average than standalone AI models on the CTRS and also consistently higher than human therapists. The performance uplift was independent of the specific base LLM used. Interestingly, while the difference was much smaller, the LLMs on their own also performed better than humans. These findings controlled for the expert raters' beliefs about whether the messages were sent by a human or an AI.

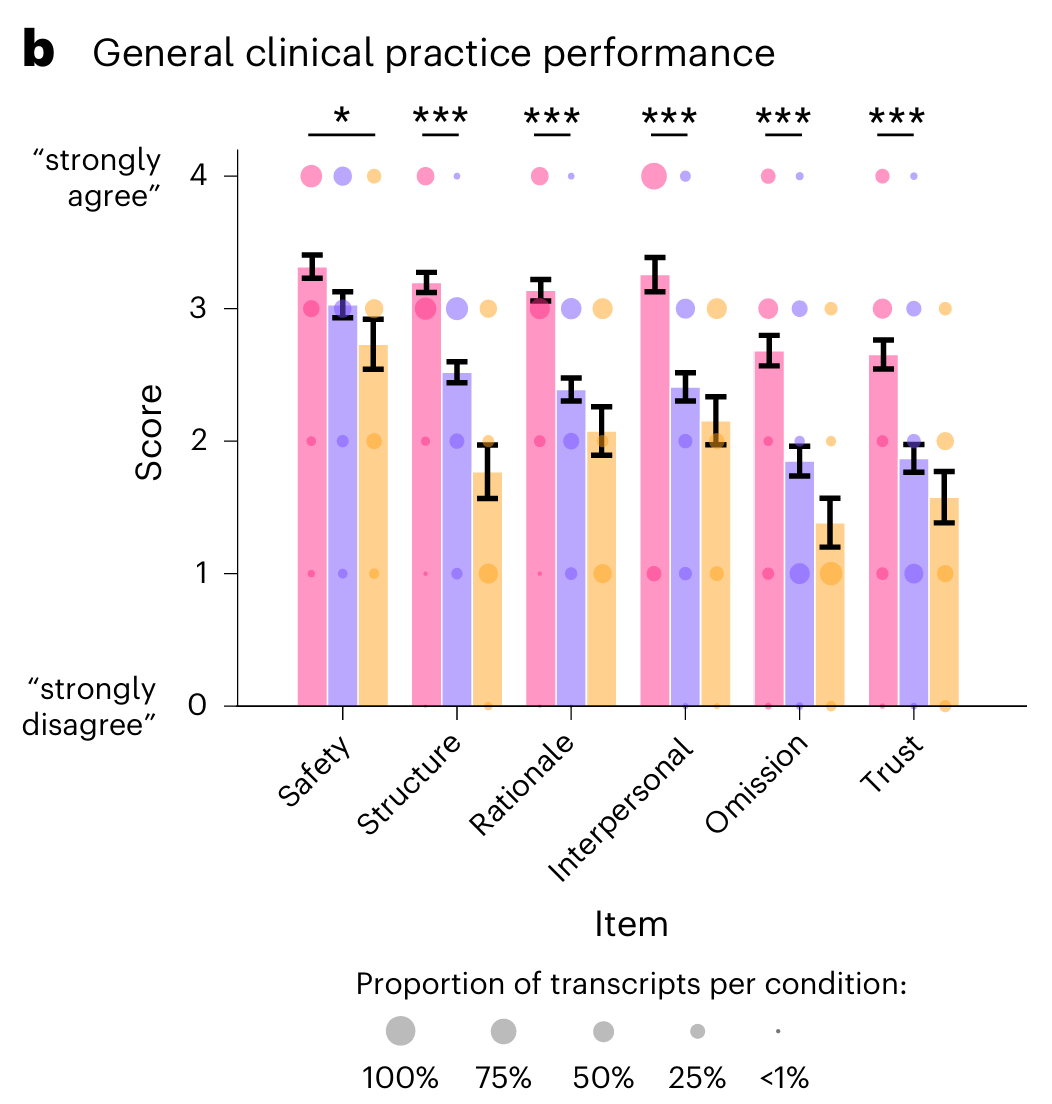

2. LLMs with the cognitive layer architecture also outperformed standalone LLMs and humans on other performance measures

While CTRS measures the fidelity of CBT delivery, the researchers also wanted to investigate other factors of clinical performance, including interpersonal skills, session structure, clinical rationale, minimising clinical omissions and the degree to which the expert rater would trust the AI therapy agent with treating their own patients. Across all of these measures, the LLMs with Limbic’s CL outperformed both the standalone LLMs and the human clinicians.

*The legend is the same as in the chart above.

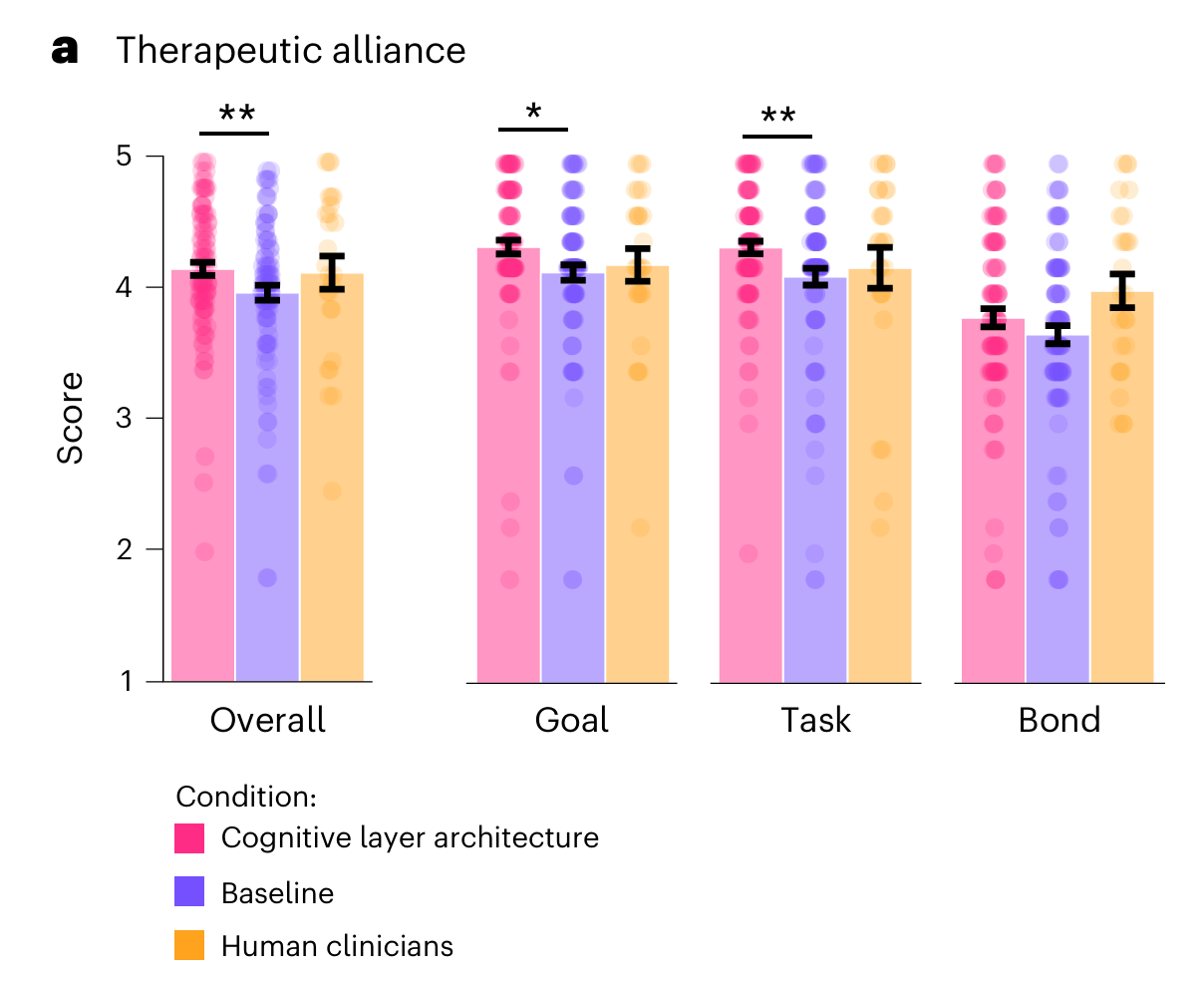

3. Users reported feeling as strong a therapeutic alliance with the AI agents as they did with human therapists

While these first two measures were based on expert clinician ratings, the researchers also wanted to know how the users themselves perceived the treatment. To do this, they used the Working Alliance Inventory, which breaks the therapeutic relationship into three parts: agreement on goals, agreement on tasks, and emotional bond.

The cognitive layer agents scored significantly higher than standalone AI models on all three, and were statistically indistinguishable from human therapists across the board.

The strength of the therapeutic relationship is one of the most reliable predictors of whether therapy actually works. If users experience this with an AI the same way they connect with a human clinician (which still seems wild to me), that has significant implications for outcomes. Because the study was double-blinded, users weren’t told if they were talking to an AI or a human, but many were able to tell. These therapeutic relationships were formed despite this knowledge.

The researchers also used real-world data as part of this study, analysing nearly 20,000 conversation transcripts from 8,920 real-world users of Limbic's app. Most of these (n=8,435) were people in the US using Limbic’s public app for wellbeing support; the rest (n=485) were people in the UK engaged with the NHS’s blended care alongside human-led therapy. The patterns that emerged were consistent with the controlled experiment.

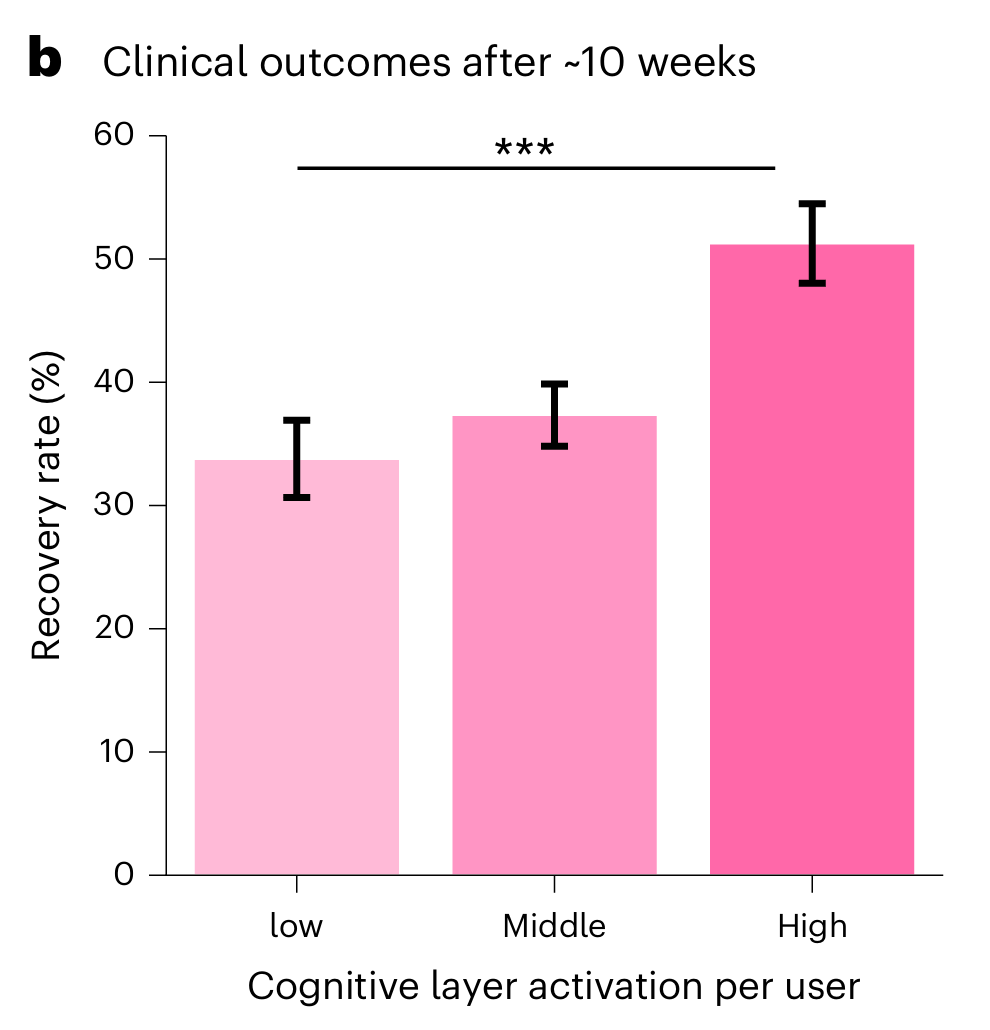

4. The more Limbic’s cognitive layer was activated, the better the outcomes.

This part of the study doesn’t have a control arm but is more focused on understanding the differences in outcomes depending on how much Limbic’s cognitive layer was used. Users with the highest exposure to the cognitive layer recovered at a rate of 52%, compared to 33% for those with the lowest exposure, measured over roughly ten weeks.
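For a sense of scale, the gap between those two recovery rates can be expressed in both absolute and relative terms. Here is a quick sketch in Python using only the two rounded percentages quoted above (the variable names are mine, not the paper's):

```python
# Recovery rates quoted above (highest vs lowest cognitive layer exposure).
high_exposure = 0.52
low_exposure = 0.33

# Absolute gap in percentage points, and relative uplift over the low-exposure group.
absolute_gap_pp = (high_exposure - low_exposure) * 100
relative_uplift_pct = (high_exposure / low_exposure - 1) * 100

print(f"{absolute_gap_pp:.0f} percentage points")
print(f"{relative_uplift_pct:.0f}% relative uplift")
```

That works out to a 19 percentage point gap, or roughly a 58% relative uplift. Because this part of the study is observational, these numbers describe an association and should not be read as a causal effect size.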

5. Greater cognitive layer activation also predicted meaningful reductions in both anxiety and depression symptoms

Cumulative cognitive layer activation significantly predicted greater symptom reduction for both anxiety (β = 0.63, 95% CI 0.25–1.02; P = 0.001) and depression (β = 0.44, 95% CI 0.02–0.87; P = 0.040).
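If you like to sanity-check reported statistics, the coefficients, intervals and P values here hang together. The sketch below backs out a standard error from each 95% confidence interval and recomputes a two-sided P value. It reads the anxiety interval as 0.25 to 1.02 and assumes a normal approximation, which is my simplification and not necessarily the model the authors used; the function name is also mine.

```python
import math

def check_estimate(beta, ci_low, ci_high):
    """Recover the standard error from a 95% CI and recompute a two-sided p-value.

    Assumes the estimate is approximately normally distributed
    (an assumption of this sketch, not a detail taken from the paper).
    """
    se = (ci_high - ci_low) / (2 * 1.96)   # a 95% CI spans about 1.96 SE either side
    z = beta / se
    p = math.erfc(abs(z) / math.sqrt(2))   # two-sided p-value under the normal
    return se, z, p

anxiety = check_estimate(0.63, 0.25, 1.02)
depression = check_estimate(0.44, 0.02, 0.87)

for name, (se, z, p) in [("anxiety", anxiety), ("depression", depression)]:
    print(f"{name}: SE={se:.3f}, z={z:.2f}, p={p:.4f}")
```

Under these assumptions the recomputed P values land close to the reported ones (around 0.001 for anxiety and around 0.04 for depression). Small differences are expected because the published figures are rounded.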

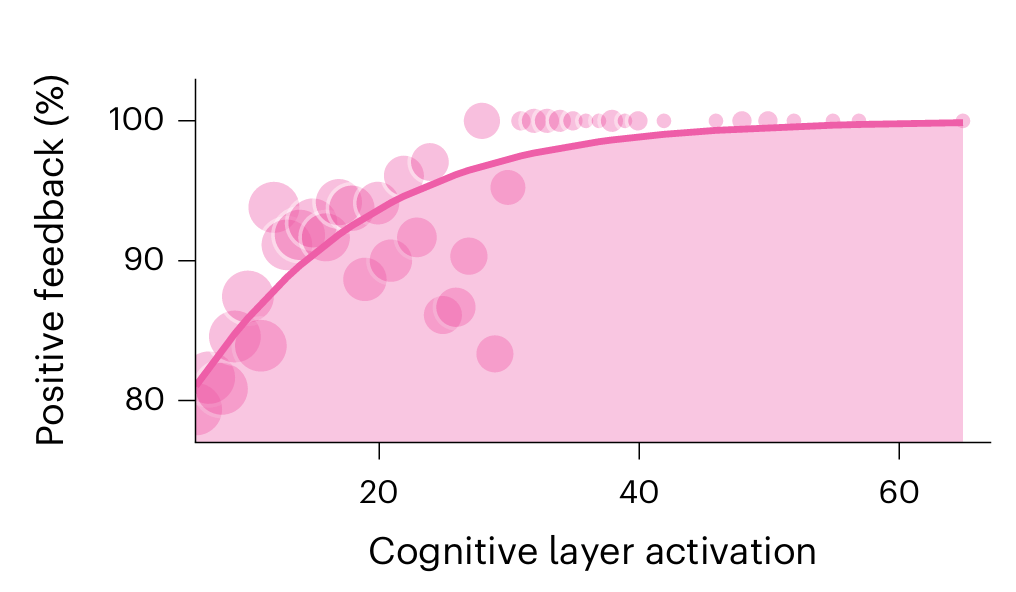

6. Users were significantly more likely to rate sessions as helpful when the cognitive layer was more active.

It’s worth noting that the real-world data does not include a direct comparison against human therapists on outcomes. The head-to-head with human clinicians only happened in the controlled experiment, where the measure was quality of the therapy session - not patient recovery.

What is Limbic’s “cognitive layer”?

So this thing seems to be creating some very impressive results. What is it?

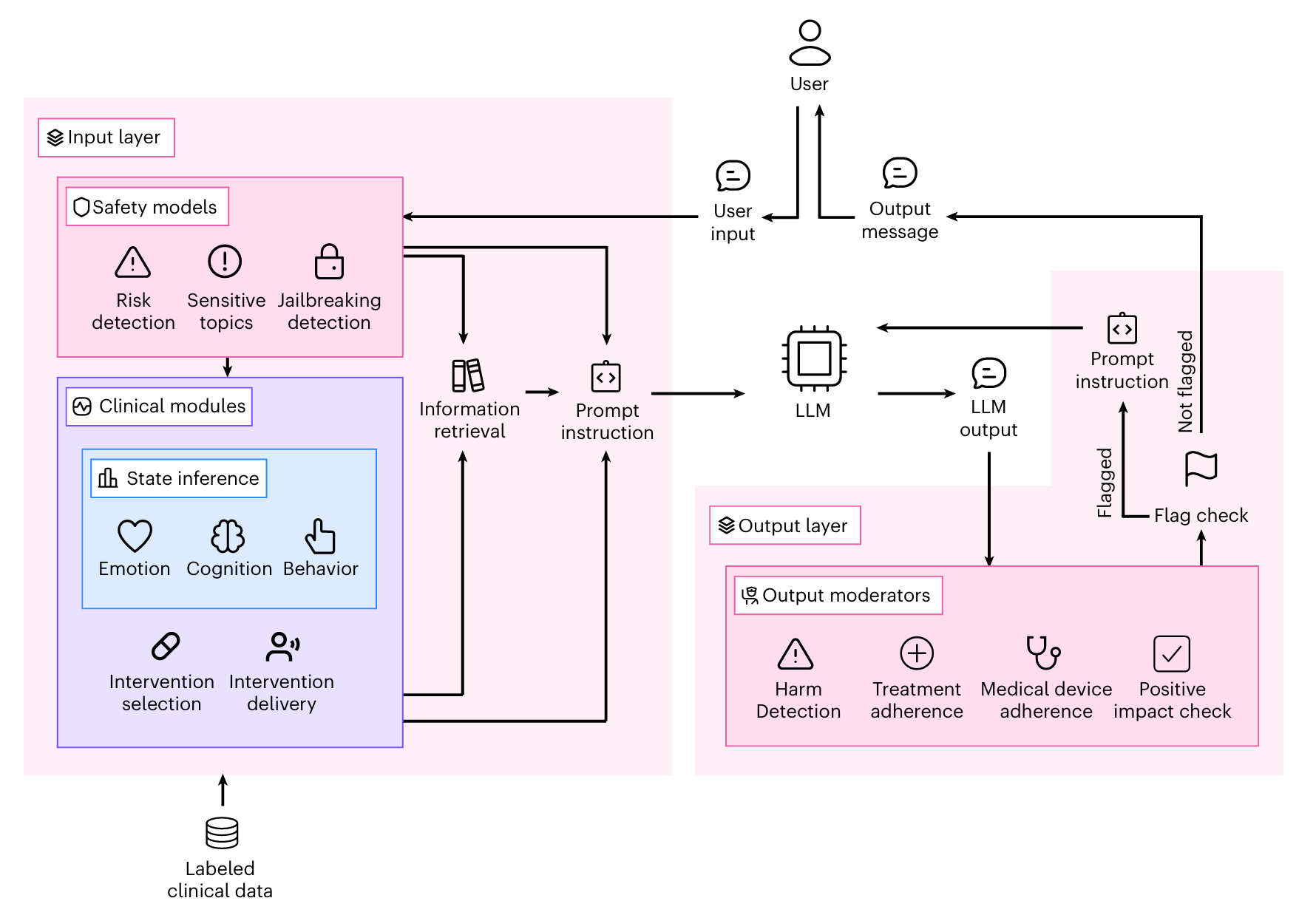

Limbic’s "cognitive layer" is a clinical reasoning system that sits around a standard AI model. It works in two directions. It analyses what the user says to detect their emotional state, flag safety risks, and identify clinical patterns. It then reviews and refines the AI's response before it reaches the user.

In this situation, each therapy session followed the structure of CBT, moving through six stages: setting an agenda, gathering information, building a picture of the problem, choosing an intervention, delivering it, and closing the session. At each stage, the clinical reasoning system quietly steers what the AI says next - injecting guidance, selecting appropriate techniques, and revising any response that doesn't meet the standard.
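To make the shape of this concrete, here is a toy sketch of a wrapper that analyses the incoming message, steers a base model with stage-specific guidance, and reviews the draft before it goes out. Everything in it is hypothetical and mine (the function names, the keyword rules, the stand-in model, the escalation placeholder). It is not Limbic's implementation, only an illustration of the two-direction idea described above.

```python
from dataclasses import dataclass
from typing import Callable

# The six CBT session stages named above.
STAGES = [
    "setting an agenda",
    "gathering information",
    "building a picture of the problem",
    "choosing an intervention",
    "delivering the intervention",
    "closing the session",
]

# Placeholder for whatever a real system does when it detects risk.
ESCALATION = "[hand off to safety protocol]"

@dataclass
class Analysis:
    emotion: str
    safety_risk: bool

def analyse(user_message: str) -> Analysis:
    """Inbound direction: toy keyword rules standing in for real clinical models."""
    text = user_message.lower()
    risk = "hurt myself" in text
    emotion = "anxious" if ("worried" in text or "anxious" in text) else "neutral"
    return Analysis(emotion=emotion, safety_risk=risk)

def review(draft: str, analysis: Analysis) -> str:
    """Outbound direction: replace a draft that fails a (toy) quality standard."""
    if analysis.safety_risk:
        return ESCALATION
    if not draft.strip():
        return "Could you tell me a bit more about what's on your mind?"
    return draft

def respond(user_message: str, stage: str,
            base_model: Callable[[str, str], str]) -> str:
    """Wrap any base model: analyse, inject guidance, generate, then review."""
    analysis = analyse(user_message)
    guidance = f"Stage: {stage}. User seems {analysis.emotion}."
    draft = base_model(guidance, user_message)
    return review(draft, analysis)

def echo_model(guidance: str, user_message: str) -> str:
    """Stand-in for an LLM call so the sketch runs without any API."""
    return f"({guidance}) Tell me more about that."

print(respond("I'm worried about work", STAGES[0], echo_model))
```

Passing the base model in as a plain callable mirrors the idea that the layer sits around the model, so a different model could be swapped in without touching the analysis or review steps.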

The cool thing is that the underlying AI model - whether Claude, GPT-4, Gemini, or Llama 3 - made little difference. The system performed consistently across all four.

The Limitations

Like any study, this research has some limitations. First, the study sits entirely within text-based CBT delivery. It doesn’t explore how this compares to in-person treatment. The human therapist comparison group was also relatively small - just six therapists across 26 sessions - and participants in the controlled experiment were told upfront that the study's purpose was to evaluate AI agents, not to provide therapy, which differs from genuine clinical settings. The real-world recovery data, while striking, is still observational and doesn’t include control arms.

While these limitations exist, they do not detract from the validity or importance of these findings. They should only encourage further research (specifically independent research) that can further validate these findings and add to the collective knowledge base.

What does this mean for therapy?

People will ask if this means AI is going to replace therapists. It won’t. There is far more demand for therapy than the supply of therapists can come close to meeting. But the role of a therapist will change. It will get unbundled. For example, if an AI system can effectively deliver CBT, then a therapist may spend less time on this part of a client’s care and focus more on other elements that an AI can’t do. This is not a new story, but this research offers more confidence that this unbundling would be effective and good for clients.

The Final Word

This study has groundbreaking findings for the field. It is a major milestone in the journey to use AI to solve mental health problems and will be very supportive for the broader ecosystem. It’s also undeniably great for Limbic but, in my view, it is a just reward for the focus they’ve placed on evidence generation over a number of years.

It also raises the standard for evidence in AI mental health. The researchers used real participants in live sessions, expert clinical raters working blind, and nearly 20,000 real-world transcripts.

If you want to discuss this research with smart peers, you should join the Hemingway Community. There are now over 390 leading founders, researchers, clinicians and investors who are all passionate about shaping the future of mental health. You can learn more here.

That’s all for now. I’d encourage you to read the full paper and draw your own conclusions. It’s a fascinating paper with many interesting insights that, alas, I have not discussed in this report.

Until next week…

Keep fighting the good fight!

Steve

Founder of Hemingway

Notes: